Facebook oversight requires systemic change instead of tinkering – Martyn McLaughlin

Facebook says the board was established to help the company “answer some of the most difficult questions around freedom of expression online: what to take down, what to leave up, and why”.

The process, it explains, involves the members of the board using their “independent judgement” to support the right to free expression, while also ensuring those rights are being respected.

The board has been described in some circles as Facebook’s equivalent of the Supreme Court, a sneer not without some truth. The very act of its formation speaks to a growing recognition by the firm of the immense and unchecked power it wields, as well as a proactive desire to impose its own transnational judicial body so as to ward off the threat of social media regulation.

The action it takes will be the ultimate determinant of its worth. Even at this early stage, it has been conferred a degree of legitimacy thanks to the calibre of its individual members. Its ranks include a Nobel peace prize laureate, human rights advocates, veteran journalists, experts on constitutional law, and the former prime minister of Denmark.

Even so, the limitations of what the company proposes as a solution to a series of persistent problems are evident. No matter the calibre or clout of the board’s members, the fact remains that they are part of a system structured and financed by the very company they are tasked with holding to account.

Any number of press releases and public pronouncements may champion the board’s independence, but that independence rests primarily on its ability to bring about real change, and in that respect, the great and the good who will pass judgement on Facebook’s decision-making processes have their hands tied.

The parameters of the board’s remit are strictly defined and, some argue, too narrow. It applies only to content that has been removed by Facebook, and which has been subject to an appeal by the individual or entity which posted it in the first place, meaning that the board has no sway over contentious content that Facebook has allowed to remain visible online.

Nor will the board be asked to assess every takedown. Operationally, this brain trust will scrutinise only a handful of what Facebook calls “highly emblematic” cases, ruling on whether its decisions should be reversed or upheld.

It would perhaps be unrealistic to expect anything more, given that Facebook’s user base is now more than two billion people strong. Even so, the key question is what the repercussions will be if and when the board sides against the platform.

From looking at the board’s charter, the answer is not encouraging. Under a section entitled “Empowered”, it notes that the board can choose to issue recommendations on content policies. Facebook, in turn, the implication goes, is free to dismiss them out of hand.

It is one thing to spark a considered analysis of the company’s impact on our lives, but when it comes to enacting permanent changes to its ad hoc policies and haphazard enforcement protocols, the board’s policing powers carry as much authority as a shopping centre security guard.

Elsewhere, in a section setting the “scope” of the board’s activities, the charter acknowledges that it will not review cases in those “limited circumstances where the board’s decision on a case could result in criminal liability or regulatory sanctions”.

In other words, if the removal of content is in compliance with the law of an individual country, that decision is final. In practice, it means that in places like Vietnam, Facebook will continue to be complicit in censoring criticism and repressing dissent, and there is nothing the board can do to change it.

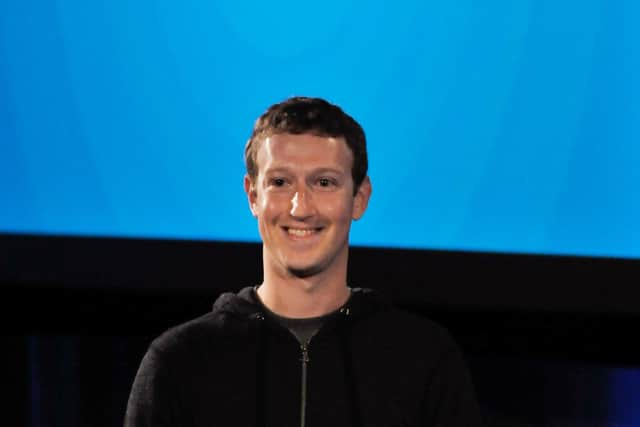

Similarly, the board has no power over harmful or deceitful political advertising on Facebook, an area in which Mark Zuckerberg has repeatedly rejected calls for fact-checking, and a growing revenue stream for his company.

Yet there is an even more fundamental omission from the board’s powers, one which robs it of any real bite to match its bark. It has no sway whatsoever over the recursive algorithmic suggestions and AI-driven architecture which put content in front of eyeballs and encourage people to dive down virtual rabbit holes by joining or following certain groups. This is the essence of Facebook’s business model, and the root of all its evils.

If the board decides that Mr Trump should be banished from the platform in perpetuity, it will be a seismic – and perhaps premature – marker of the debate over the rules and regulations of the digital frontier, and it will rid the platform of one of its most toxic presences. For that we should be grateful.

What it will not do, however, is stem the tsunami of hate and disinformation that follows in his wake. The implication of removing Mr Trump, and having the board endorse that decision, is that Facebook’s definitive response to harmful and hateful content is to make it more difficult to find, rather than remove it altogether. That is patently nonsense, given the co-ordinated disinformation networks that remain in place.

It requires only a cursory search of Facebook’s pages and groups to find highly active subsets of Trump supporters who continue to push myths around election fraud, racist barbs, and a flurry of fake news from far-right online outlets. Mr Zuckerberg knows that. Indeed, he is profiting from it. Hopefully, the members of the oversight board will come to realise that they are being asked to tinker under the bonnet of a system expressly engineered to foment outrage.

