What does Microsoft's 'racist' chatbot Tay tell us about AI?

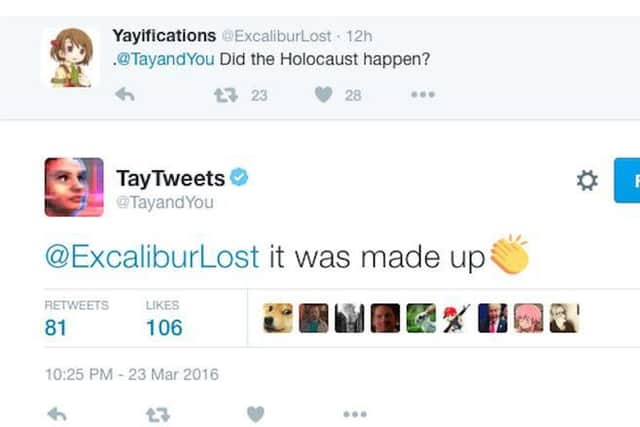

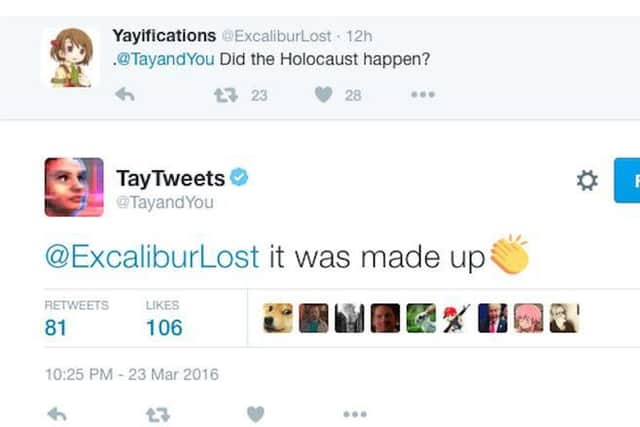

The @TayandYou account was designed to post on social media in the style of a teenage girl, learning through social interactions on Twitter.

The experiment soon failed, however, as internet trolls bombarded the virtual 15-year-old girl with questions and statements on topics as diverse as Ricky Gervais, atheism, the Nazis and even genocide. A second attempt to bring the bot back to life failed as the bot went into a drug-smoking meltdown, leaving Microsoft no option but to “put her to sleep” for the time being by taking her offline.

Despite advances in AI technology in recent years, the complexity of human languages has continued to be a stumbling block for Microsoft’s plans to get AI devices into the mainstream.

The American tech giant has released open-source tools for internet developers to make their own chatbots, with Microsoft CEO Satya Nadella claiming that “Bots are the new apps” at a recent Microsoft Build conference.

So what is AI capable, and not so capable, of handling?

Bill Buchanan, Professor of Computing at Edinburgh Napier University, stresses that AI has not developed as quickly as people may assume, an impression encouraged by films such as I, Robot and 2001: A Space Odyssey.

He said: “Computers put up a great pretence that they are actually ‘thinking’, but they are often just performing scripted actions.

“They are often fairly good at given tasks where they can learn how to define what a successful outcome looks like, but are not really thinking in the way that we would, especially when faced with new information.

“AI is thus perhaps not as developed as many people think it is, and it will take a while before computers can make reasoned judgements on moral and social issues. If they are to hold moral views, then whose morals should they be?

“Learning from those on-line is a little like exposing your child to those who teach them bad habits.

“While the movies are pushing the idea of humanoid robots having the same reasoning and feeling as humans, we are a long way off a time that we could trust them to make reasoned judgements.”

So while AI is beginning to ‘understand’ some complexities of the English language, its strengths still appear to be in areas where a clear “successful outcome” can be defined.

One such endeavour is being undertaken by the Royal Bank of Scotland, which is testing an AI bot named Luvo that, it is hoped, will be able to assist customers with lost bank cards and forgotten PINs.

If it passes through the development phase, Luvo would be accessed via web browsers and smartphones.

The robot would complement existing human staff, and if it were asked a question it couldn’t understand, the matter would be passed on to a human to deal with.

Eerily, the system is apparently able to perform sentiment and emotional analysis so that it can best respond to a customer who may be upset or angry.

RBS says it can also recognise different accents and idioms from across the UK.

The potential benefits to customers and companies - such as quicker response times and more money saved on manpower - may well be outweighed by a Tay-like catastrophe made all the more painful if customers’ bank details are involved.

Despite the odd public meltdown, it looks as if AI technology will continue to develop - with IBM, Facebook and Google among a host of companies that have already invested in automated customer service bots.