Scottish scientists find new ways to understand brain

The visual cortex processes sight by receiving feedforward input from the eyes. But to fully comprehend what we are seeing, it also requires feedback from the parts of the brain that conceptualise and contextualise.

So far scientists have been impeded by the fact that both signals are integrated within the six different layers of the cortex. Until now, no technique had been able to isolate the contextual feedback signal in human cortical layers.

Researchers at the University of Glasgow have come up with a solution by taking advantage of the fact that input from the retina is mapped out in the visual cortex.

Much as light entering the lens of a camera hits a specific portion of the sensor to form a pixel, light entering the eye has a corresponding portion in the visual cortex, which, when applied to magnetic resonance images, is called a voxel.

In order to study the feedback signal, the researchers showed subjects a picture – for example a car – part of which was obscured by a white square. This enabled them to identify and isolate the area of the brain that responded only to the occluded portion of the scene, and thus quieten the feedforward signal.

But even in the absence of sensory input the visual cortex communicates with other brain areas.

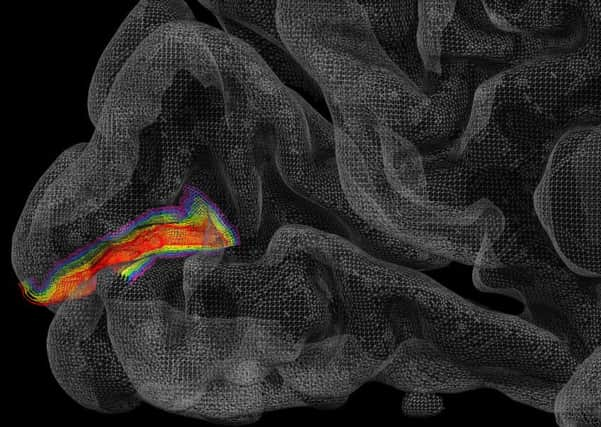

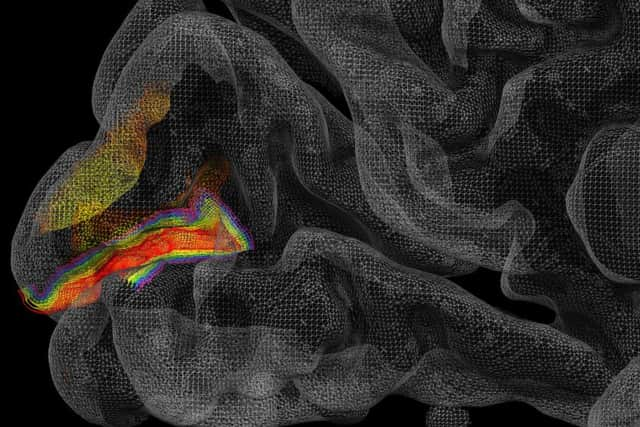

By measuring the activity in this part, the researchers were able to see where feedback and feedforward activity took place across the six different layers of the cortex as the brain tried to complete the picture by inferring what the whole scene looked like.

The ability to measure layer-specific signals in humans was made possible by 7 Tesla MRI techniques pioneered at the University of Minnesota’s Center for Magnetic Resonance Research. Professor Essa Yacoub and colleagues have developed techniques that allow visualisation of human brain activity at sub-millimetre spatial resolutions and with high degrees of accuracy. Such a capability was previously only possible with invasive studies in animals.

The result reveals the layered cortical organisation of external versus internal processing streams during perception, with activity during normal visual stimulation peaking in mid-layers and contextual information peaking in superficial layers.

Professor Lars Muckli, of the Institute of Neuroscience & Psychology, said: “Understanding the brain’s feedback system is important if we are to develop more powerful computers and artificial intelligence systems, but it might also help us to better understand mental illnesses such as schizophrenia and autism.

“The predictive coding hypothesis suggests the brain tries to process efficiently the huge amount of sensory information it receives by creating predictive models of the world based on previous experience.

“For example, the brain expects to see your favourite armchair in the context of your house, so seeing it suddenly sitting in your office would be regarded as unusual.

“In healthy people, the feedback system plays an important role in understanding concepts and contexts in everyday life. But in people with mental health conditions like autism, we believe that something within the feedback system isn’t working correctly. For them everyday scenes that should be regarded as familiar can seem strange.

“Our study provides empirical evidence to support theoretical feedback models such as predictive coding and demonstrates the potential of high-resolution MRI in studying sub-millimetre human cortex.”